open Page See: new STageR Content Source

To create a new content source: on "" button on "STageR Connector"

"" pulldown select Manually and pulldown pulldown in the option rearrange unselect "" checkbox

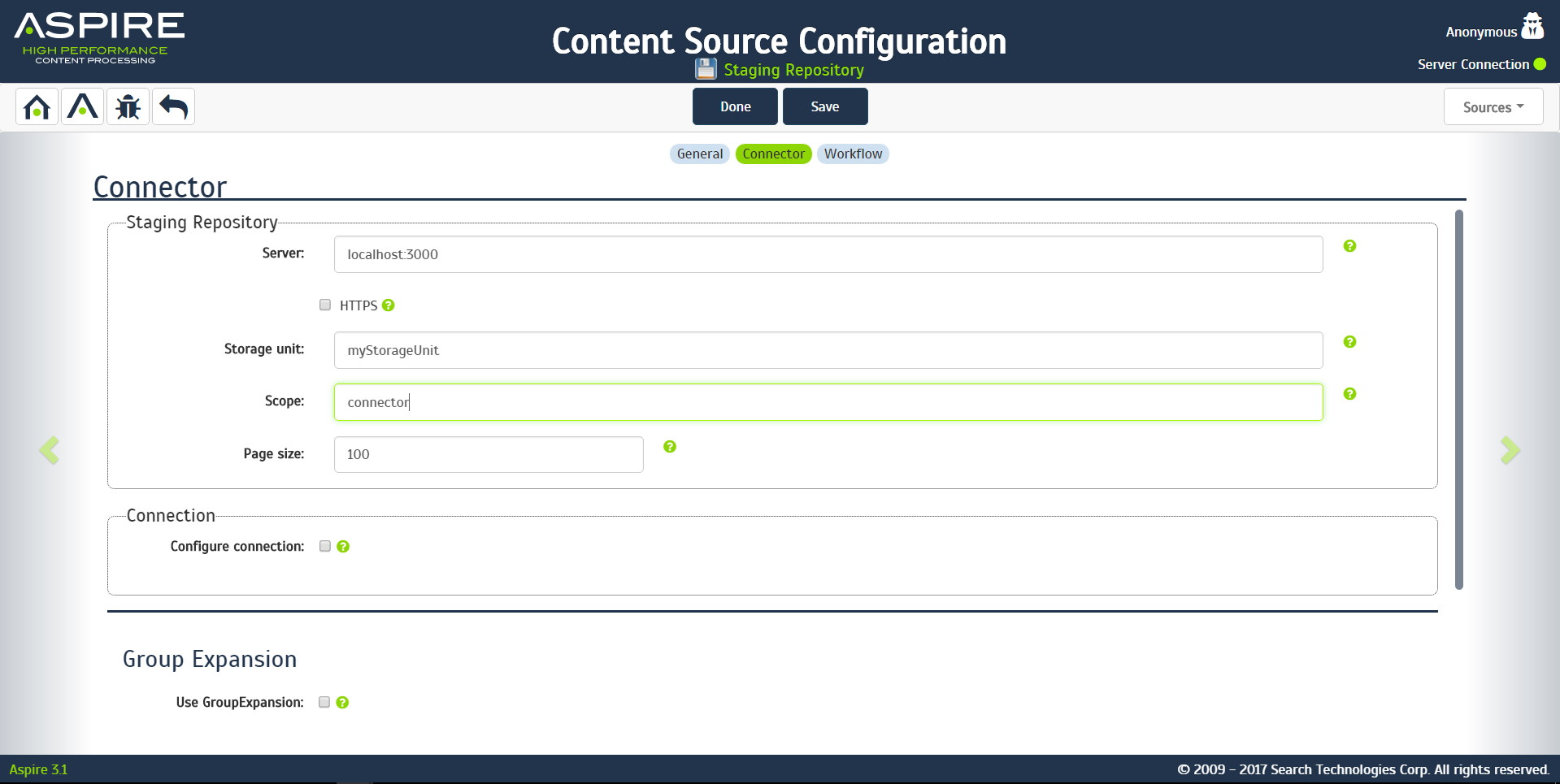

Connector Information "" the STageR.

- Example

- Example

Step 2c. Specify Workflow Information

In the "Workflow"
